Claude Opus 4.7: The New Standard for Agentic AI Reasoning and Advanced Software Engineering

AI Overview: Quick Facts

Anthropic has launched Claude Opus 4.7, a direct upgrade over Opus 4.6 with significant improvements in software engineering, instruction following, and high-resolution vision (images up to roughly 3.75 megapixels).

New features include an xhigh effort level for complex reasoning tasks, task budgets, and the /ultrareview command in Claude Code. Pricing is unchanged at $5 per 1M input tokens and $25 per 1M output tokens.

The race for the ultimate autonomous AI agent continues to accelerate. Today, Anthropic released Claude Opus 4.7, promising developers a model that handles complex, long-running agentic tasks with rigorous consistency. If Opus 4.6 was a skilled programmer, Opus 4.7 acts as a senior technical lead capable of verifying its own output.

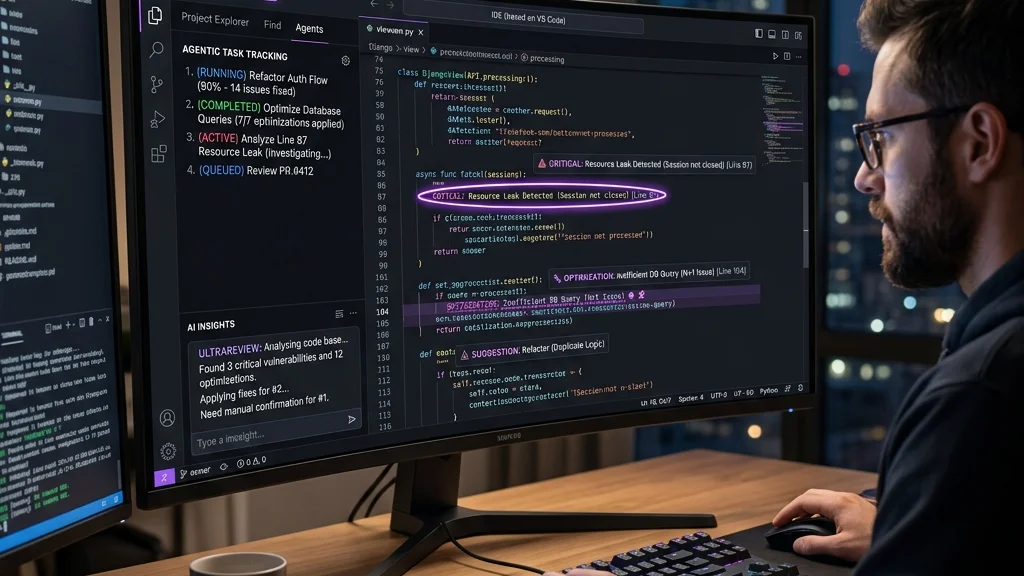

01. Advanced Engineering & The /Ultrareview Command

At its core, Opus 4.7 is designed for hard software engineering. Where previous models sometimes skipped instructions or hallucinated approaches when deep in a codebase, Opus 4.7 uses improved file-system memory to recall exact requirements across multi-session workflows.

⚙️ True Instruction Following

Opus 4.7 takes prompts literally. You may need to tune older prompts, because the model no longer "loosely interprets" instructions; it follows them strictly.

🕵️ The /ultrareview Command

Claude Code now includes /ultrareview, which reviews code like a meticulous senior engineer and catches hidden design flaws and bugs.

02. Vision Limits Shattered

Opus 4.7 now accepts images up to 2,576 pixels on the long edge (approximately 3.75 megapixels), more than three times the resolution supported by previous Claude models. What does this mean? AI agents can now read dense UI screenshots, extract data from complex architectural diagrams, and make pixel-level comparisons.
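If you preprocess screenshots before sending them, the long-edge limit translates into a simple scaling rule. The sketch below is illustrative only: the helper name `fit_long_edge` is hypothetical, it assumes the 2,576-pixel figure quoted above is the only constraint, and it only computes target dimensions, leaving the actual resize to whatever image library you already use.

```python
MAX_LONG_EDGE = 2576  # long-edge limit quoted in this article


def fit_long_edge(width: int, height: int,
                  max_long_edge: int = MAX_LONG_EDGE) -> tuple[int, int]:
    """Return (width, height) scaled down so the longer side fits the limit.

    Hypothetical helper: preserves aspect ratio and never upscales.
    """
    if width <= 0 or height <= 0:
        raise ValueError("dimensions must be positive")
    long_edge = max(width, height)
    if long_edge <= max_long_edge:
        return width, height  # already within the limit
    # Multiply before dividing to keep rounding error small.
    new_width = max(1, round(width * max_long_edge / long_edge))
    new_height = max(1, round(height * max_long_edge / long_edge))
    return new_width, new_height


if __name__ == "__main__":
    # A 4K screenshot is scaled down; a small one passes through unchanged.
    print(fit_long_edge(3840, 2160))
    print(fit_long_edge(800, 600))
```

With these assumptions, a 3840x2160 capture comes out at 2576x1449, and anything already under the limit is left alone.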

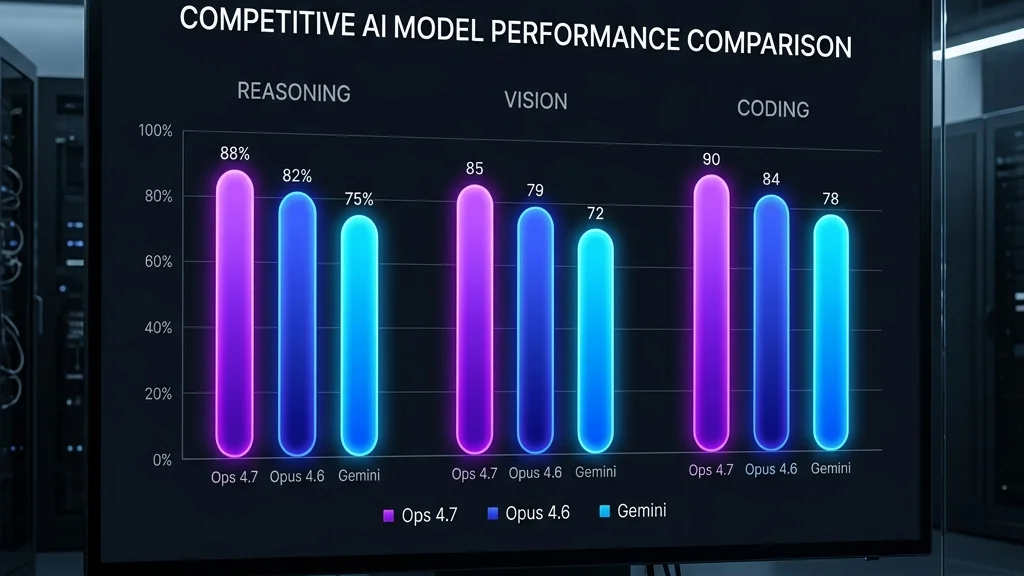

03. Model Comparison: How Does It Stack Up?

Let’s contextualize this release within the broader 2026 AI landscape, including Google's latest Gemini 3.1 Pro.

| Feature Domain | Claude Opus 4.7 | Claude Opus 4.6 | Gemini 3.1 Pro | Claude Mythos Preview |
|---|---|---|---|---|
| Coding & Agency | Excellent (+xhigh effort) | Very Good | Excellent (Deep Think) | Industry Leading |
| Vision | ~3.75 MP | ~1.2 MP | Scale-dependent | Unknown limits |
| Cybersecurity Risk Tier | Gated / Monitored | Standard | Standard | High (Restricted Release) |

04. Migration and the New Tokenizer

Upgrading isn't as simple as flipping a switch. Opus 4.7 uses a new tokenizer, so the same input string can map to between 1.0x and 1.35x as many tokens as before, depending on the payload. Combine that with the new xhigh effort level, where the model spends significantly more tokens "thinking" before answering, and costs can climb quickly if they aren't monitored.
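To get a feel for the tokenizer effect, you can bracket a workload's cost using the figures quoted in this article. This is a rough sketch, not a billing tool: the function names are hypothetical, it scales only the input token count by the 1.0x to 1.35x range, and it holds output tokens fixed, which ignores any extra "thinking" tokens from higher effort levels.

```python
# Prices quoted in this article (USD per 1M tokens).
INPUT_USD_PER_MTOK = 5.00
OUTPUT_USD_PER_MTOK = 25.00
# Tokenizer growth range quoted in this article.
TOKENIZER_MULTIPLIER_RANGE = (1.0, 1.35)


def cost_usd(input_tokens: float, output_tokens: float) -> float:
    """Cost of one workload at the quoted per-token prices."""
    return (input_tokens * INPUT_USD_PER_MTOK
            + output_tokens * OUTPUT_USD_PER_MTOK) / 1_000_000


def migrated_cost_range(old_input_tokens: int,
                        output_tokens: int) -> tuple[float, float]:
    """Best-case and worst-case cost after the tokenizer change.

    Assumption: only the input count is scaled; output tokens are held fixed.
    """
    low, high = TOKENIZER_MULTIPLIER_RANGE
    return (cost_usd(old_input_tokens * low, output_tokens),
            cost_usd(old_input_tokens * high, output_tokens))


if __name__ == "__main__":
    best, worst = migrated_cost_range(2_000_000, 400_000)
    print(f"${best:.2f} to ${worst:.2f}")
```

For a workload that previously used 2M input tokens and 400K output tokens, this brackets the bill between $20.00 and $23.50, before accounting for any change in output length.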

To counter this, Anthropic introduced Task Budgets on the Claude Platform API, allowing developers to set ceilings on token expenditure.
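The article does not describe the Task Budgets parameters, so the following is not that API. It is a minimal client-side sketch of the same idea, a token ceiling that an agent loop checks after each call, with hypothetical names (`TokenBudget`, `BudgetExceeded`) and token counts you would take from whatever usage numbers your API responses report.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a charge would push usage past the ceiling."""


class TokenBudget:
    """Client-side running total of tokens spent against a fixed ceiling.

    Hypothetical helper for illustration; not part of any Anthropic SDK.
    """

    def __init__(self, ceiling: int) -> None:
        if ceiling <= 0:
            raise ValueError("ceiling must be positive")
        self.ceiling = ceiling
        self.spent = 0

    @property
    def remaining(self) -> int:
        return self.ceiling - self.spent

    def charge(self, input_tokens: int, output_tokens: int) -> int:
        """Record one call's usage and return the tokens left.

        Raises BudgetExceeded, without recording anything, if the call
        would exceed the ceiling, so the caller can stop its loop.
        """
        total = input_tokens + output_tokens
        if total > self.remaining:
            raise BudgetExceeded(
                f"need {total} tokens, only {self.remaining} left")
        self.spent += total
        return self.remaining


if __name__ == "__main__":
    budget = TokenBudget(ceiling=1_000)
    print(budget.charge(300, 200))  # tokens left after the first call
    try:
        budget.charge(400, 200)     # would overshoot the ceiling
    except BudgetExceeded as err:
        print("stopping:", err)
```

A guard like this only stops your own loop; a server-side budget would presumably be enforced by the platform itself.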

05. FAQ: Claude Opus 4.7

Q: How much does Claude Opus 4.7 cost?

Pricing remains identical to Opus 4.6: $5 per million input tokens and $25 per million output tokens on the API.

Q: What is the "xhigh" effort level?

It stands for "extra high," sitting between high and max. It trades increased latency and token cost for dramatically better reasoning capabilities on difficult logic problems.

Q: What is Claude Mythos Preview?

Mythos Preview is Anthropic's most powerful model and is available only under a restricted release, with particular strength in domains like cybersecurity (Project Glasswing). Opus 4.7 incorporates many architectural lessons from Mythos but is considered safe for general availability.

Q: Where is Opus 4.7 available?

It's available via the Claude Platform API, Amazon Bedrock, Google Cloud’s Vertex AI, and Microsoft Foundry.

